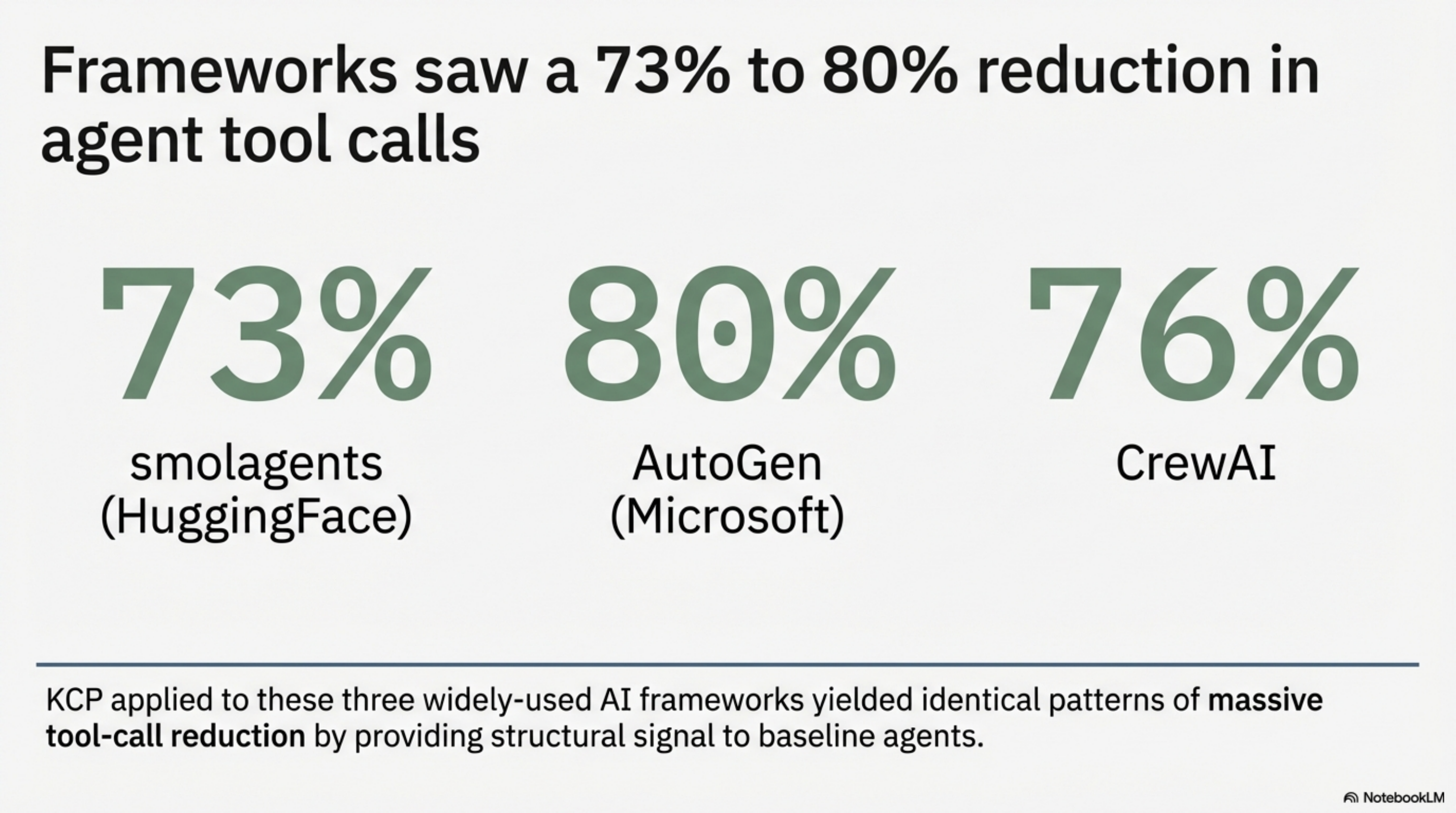

KCP on Three Agent Frameworks: Same Pattern, Bigger Numbers

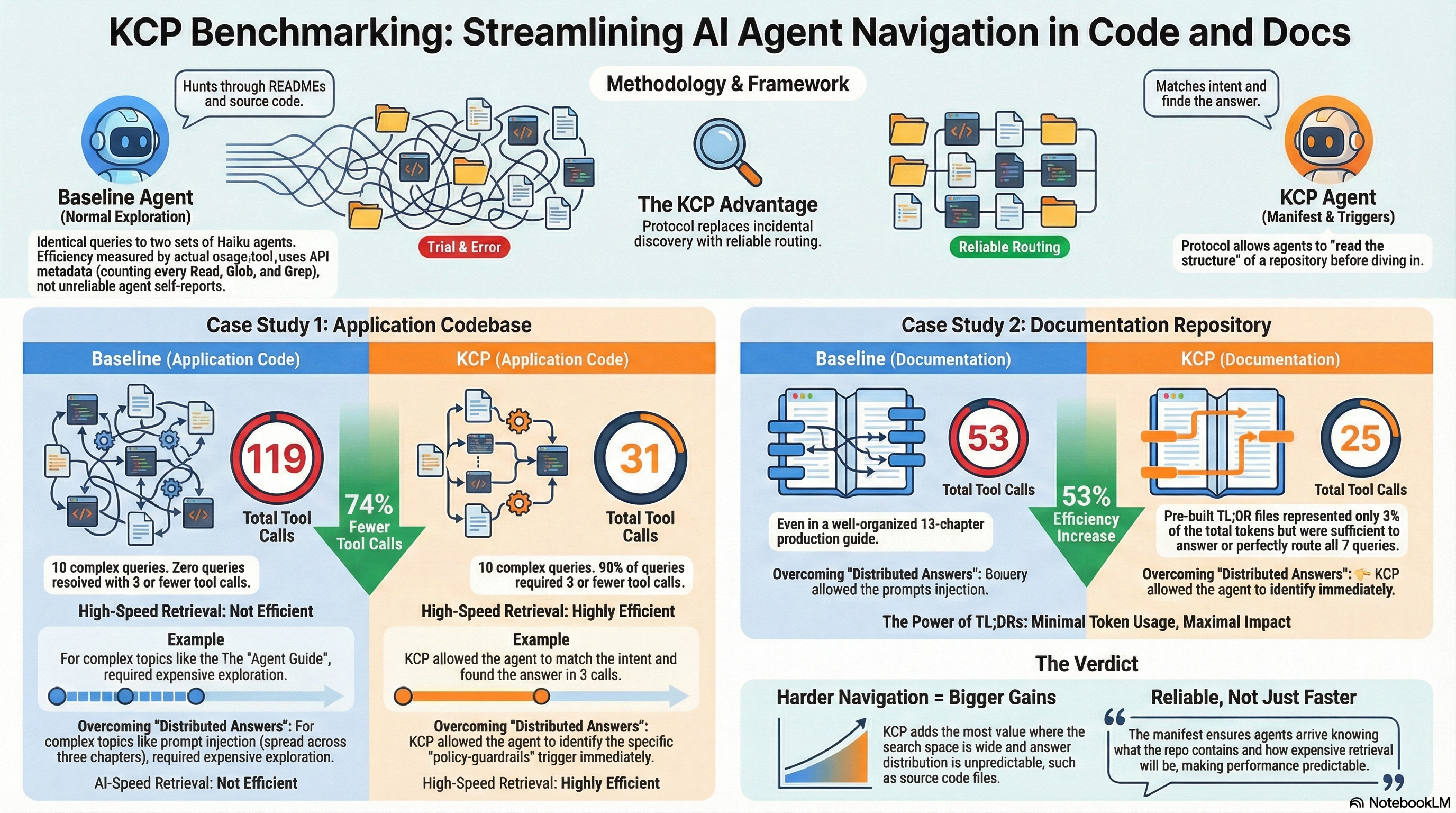

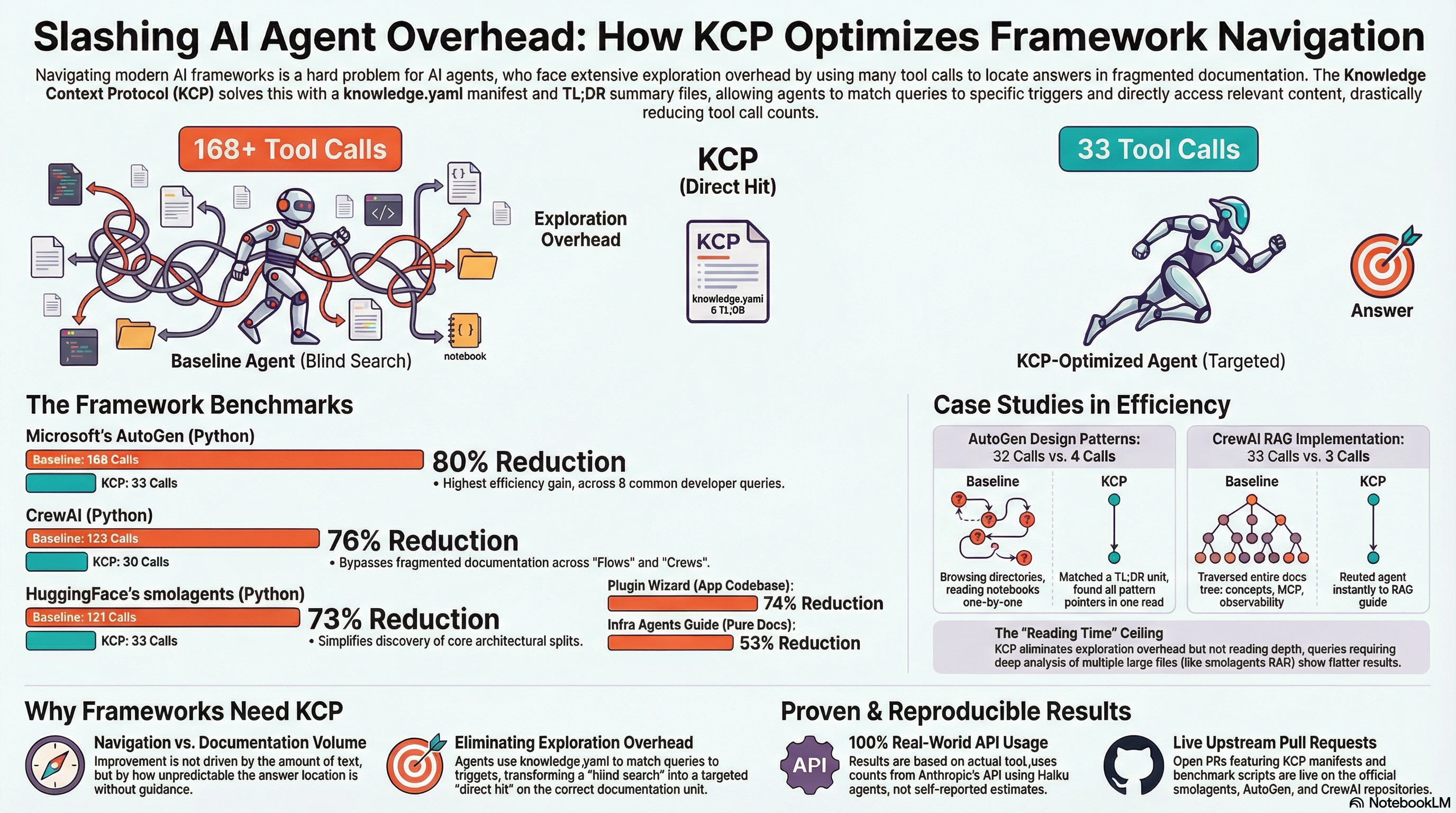

Today we applied KCP to three of the most widely used AI agent frameworks: smolagents (Hugging Face, 25K stars), AutoGen (Microsoft, 55K stars), and CrewAI (44K stars). All three got the same treatment: a knowledge.yaml manifest, pre-built TL;DR summary files for the highest-traffic sections, and a before/after benchmark using the same model and methodology.

The results: 73%, 80%, and 76% reductions in agent tool calls. Open PRs are live on all three repositories.
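For readers who want to see how a figure like these is derived, here is a minimal sketch of the arithmetic, assuming the reduction is computed as the relative drop in tool calls between the baseline run and the KCP run. The function name `tool_call_reduction` and the example counts are hypothetical and are not taken from the benchmark.

```python
def tool_call_reduction(before: int, after: int) -> float:
    """Return the percentage drop in tool calls from `before` to `after`."""
    if before <= 0:
        raise ValueError("before must be a positive number of tool calls")
    return 100.0 * (before - after) / before


# Hypothetical counts for illustration only; these are not benchmark data.
example_before, example_after = 40, 10
print(f"{tool_call_reduction(example_before, example_after):.0f}% fewer tool calls")
```

A relative measure like this makes runs on repositories of different sizes comparable, which is presumably why the results are reported as percentages rather than raw call counts.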